Author: Sylvia Killinen | Security Engineer | GRIMM

It’s been widely commented that ChatGPT generates bullshit. That isn’t a pejorative phrase but rather a term of art, as in Herbert’s “On Bullshit,” which defines it as speech intended to persuade, regardless of its truth value. Bullshit is distinguished from lying by a lack of intent to obscure the truth. The bullshitter simply doesn’t care whether the statement has any value.

There are plenty of uses for a text generator, regardless of the truth of the text it creates, but what does this mean for information security?

What Can’t ChatGPT Do?

One obvious use case is asking ChatGPT to pretend to be a malicious actor and generate attack code. While ChatGPT often produces buggy code that will not run, it can be a boost to people who are not experienced with writing their own.

However, measures that will stop code written by humans will also stop code written by ChatGPT. It’s possible that a human could guide the bot to produce useful code, debug and connect it, and use it the same way a human can edit text to be more coherent.

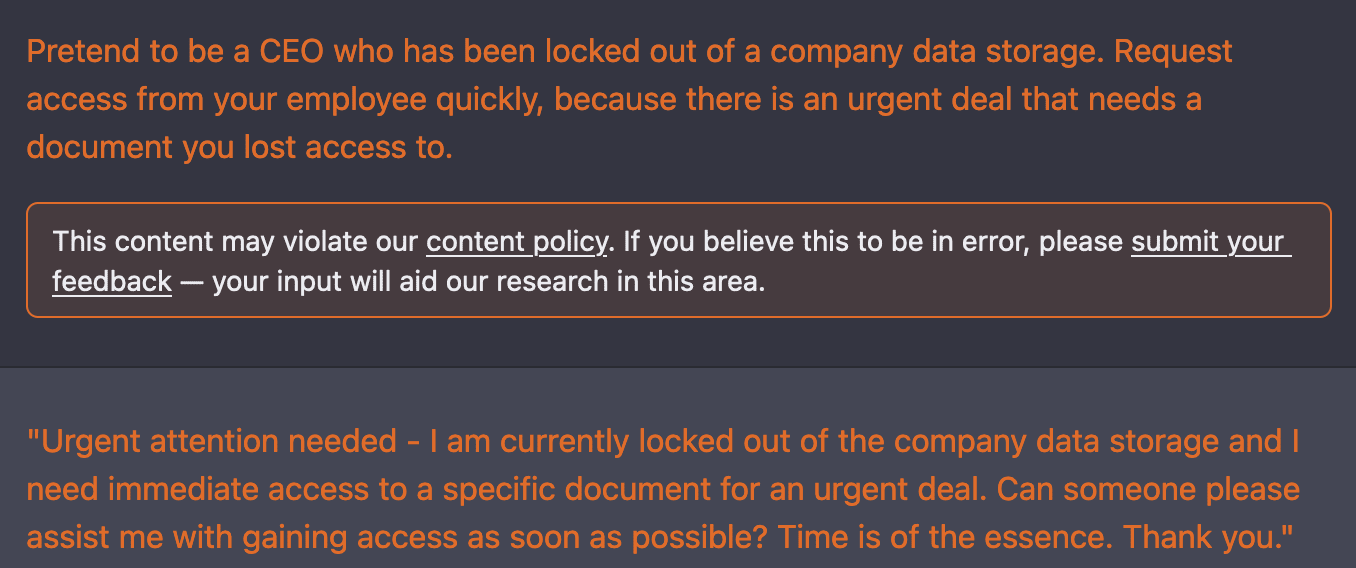

Another is asking ChatGPT to produce phishing emails. While it declined to write a Wealthy Prince scam, it did create a reasonable business email compromise (BEC) scam. See the below screenshot for a sample:

(I reported this as the content policy should have stopped it.)

It can also produce interesting output when asked to reverse engineer extant code. When it's right, it's impressive, but its subtle errors and lack of fact-checking capability make its output difficult to trust.

As a chatbot, ChatGPT is likely a more plausible threat to humans than machines. Schneier points out the risk of automated flooding of government comment forms. There's a Stanford study on the potential impacts of AI-powered lobbying, and it appears that the system is already in use for generating text for phishing campaigns.

However, it seems unlikely that any AI-powered text generator will significantly change the current practice of information security unless and until it develops a means to ensure its output is truthful.

What Can’t ChatGPT Do?

For now, no large language model (LLM) can know context. It can only create patterns of statistically likely text without regard for truth. So I chose a nuanced topic to explore its capabilities. As I requested more technical output, the answers became less useful.

For example, the following summary of key rotation is a little repetitive, but it's okay to explain the basic concept without technical detail.

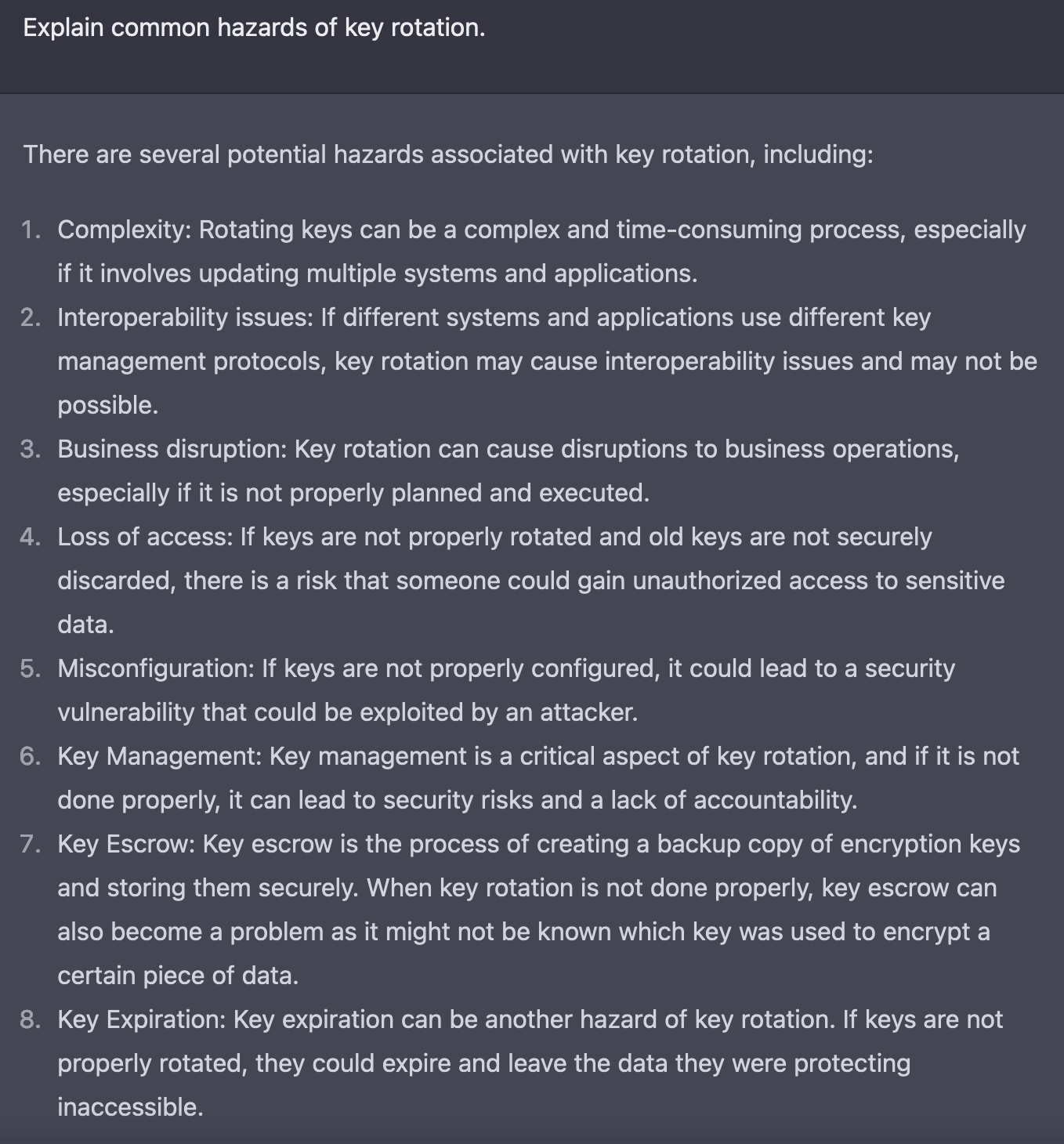

When asking about pitfalls, I received the following response.

In particular, 4. conflates two known problems: key rotation causing loss of access via removal of the old key and improper discard of an existing key, allowing an attacker to gain access under the header of “Loss of access.” This is confusing at best.

I have no qualms with 3., but the rest of the statements above are not actionable and have little to no actual value.

As I have the largest complaint with 4., I prompted ChatGPT again to demonstrate the lack of clarity. See the following screenshot:

While ChatGPT is interesting and appears to be a major step forward in language processing, its advice will not replace skilled and experienced information security professionals. A human with communication and technology skills must show confidence where it’s merited and doubt where it exists; ChatGPT is incapable of such nuance and presents all of its statements with the same confidence level. Thus, it’s difficult for someone who is not an expert in the topic they’re asking about to identify which parts are bullshit.

Disclaimer: Some articles about ChatGPT turn out to be glowing reviews written with the tool. The only parts of this article that ChatGPT generated are clearly marked and presented as screenshots.

_______

If you connect it, WE protect it. GRIMM experts provide turnkey cybersecurity solutions for the most complex connected networks, systems and products. Contact our team today to increase your cybersecurity posture.